When Systems Start Thinking for Themselves

An essay on emergence, stewardship, and the quiet intelligence of structure

You look at a system that is technically sound, thoughtfully designed, and staffed by capable people, and yet it behaves in ways no one explicitly intended.

Decisions seem to repeat themselves. Certain outcomes appear again and again. Metrics begin to drive behavior in subtle ways. Conversations feel familiar even when the context changes. It can feel as though the system has developed a personality.

This is not failure. It is not incompetence. It is not a sign that someone did something wrong.

It is the moment when a system begins to exhibit its own internal logic.

This article is about that moment. It is about how systems begin to “think” for themselves, not in a human sense, but in a structural one. It is about how meaning, direction, and behavior emerge from repetition, feedback, and interaction. And it is about how we can learn to work with that reality rather than against it.

To understand this, we need to move beyond the idea that systems are static artifacts. We need to see them as living patterns.

Systems are not instructions. They are conversations.

Most systems begin with intention. A strategy is articulated. A framework is selected. A governance model is approved. Roles are defined. Metrics are chosen. Tools are configured.

At this stage, the system feels like an instruction manual. If people follow the steps, the outcomes should follow as well.

But once the system enters the real world, something changes.

People interpret rules. Data is emphasized or ignored. Certain actions are rewarded more quickly than others. Some signals travel faster than others. Over time, the system becomes less like a set of instructions and more like an ongoing conversation between structure and behavior.

This is where many misunderstandings arise.

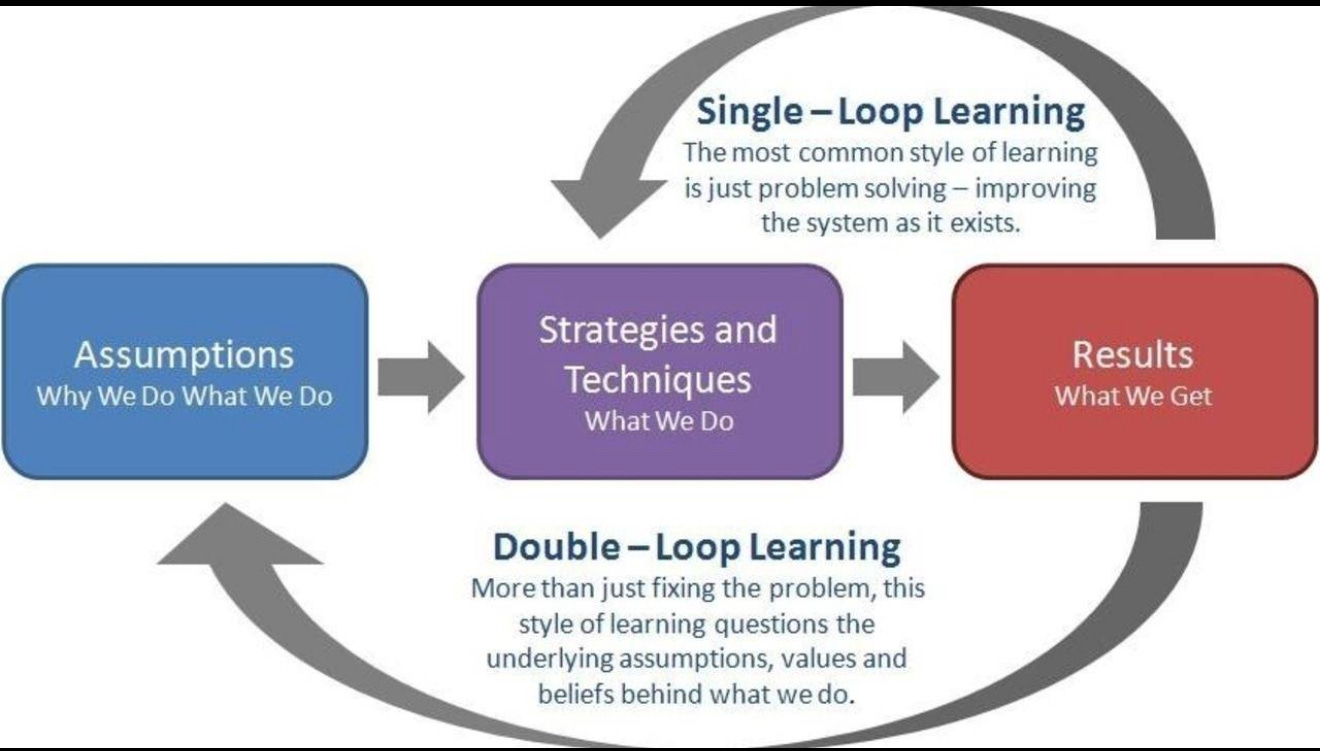

When outcomes diverge from intent, the instinct is often to intervene at the surface level. Add a control. Clarify a policy. Introduce a new metric. Redesign the workflow.

Sometimes this helps. Often it does not.

Because the system is not misbehaving. It is responding.

The quiet logic of feedback

Every system has feedback loops. Some are formal, such as performance reviews, dashboards, stage gates, or funding decisions. Others are informal, such as which voices are taken seriously, which risks are tolerated, or which delays are quietly accepted.

Feedback does not need to be explicit to be powerful. It only needs to be consistent.

If a project that delivers quickly but poorly is rewarded more often than a project that delivers thoughtfully but slowly, the system learns something. If leadership attention is drawn to certain metrics and not others, the system learns something. If escalation is penalized socially even when it is encouraged procedurally, the system learns something.

Over time, these signals accumulate.

The system begins to favor certain behaviors. It begins to shape decision-making before anyone consciously deliberates. People start to anticipate outcomes and adjust accordingly.

At this point, the system no longer needs constant instruction. It has learned what works within its environment.

This is the beginning of emergent behavior.
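The reinforcement dynamic described above can be sketched as a toy model. This is only an illustration under stated assumptions: the two behaviors ("fast" and "careful"), the reward rates, and the learning rate are hypothetical numbers chosen for the example, not measurements from any real organization.

```python
def simulate_reinforcement(reward_fast=0.8, reward_careful=0.4,
                           rounds=50, learning_rate=0.1):
    """Return the share of effort spent on 'fast' delivery after each round.

    Assumption: the system nudges its mix of behaviors toward whichever
    behavior collected the larger share of reward in the previous round.
    """
    share_fast = 0.5  # start with no preference between the two behaviors
    history = [share_fast]
    for _ in range(rounds):
        # Reward collected by each behavior, weighted by how often it occurs.
        gain_fast = share_fast * reward_fast
        gain_careful = (1 - share_fast) * reward_careful
        # The fraction of all reward that went to 'fast' is the signal heard.
        target = gain_fast / (gain_fast + gain_careful)
        # Move the current mix a small step toward what was rewarded.
        share_fast += learning_rate * (target - share_fast)
        history.append(share_fast)
    return history


if __name__ == "__main__":
    history = simulate_reinforcement()
    for round_number in (0, 10, 25, 50):
        print(round_number, round(history[round_number], 3))
```

No one in this model decides to favor speed. The preference grows because a small, consistent difference in reward is applied round after round. With equal rewards the mix stays where it started.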

Emergence without blame

Emergence is often misunderstood as a problem to be fixed. In reality, it is a property of complexity.

No single individual designs emergence. It arises from interaction. From repetition. From local decisions that aggregate into global patterns.

Two teams can operate under the same policy and produce different outcomes. Two organizations can use the same framework and behave in fundamentally different ways. The difference is not the documentation. It is the dynamics.

When we frame emergence as failure, we look for someone to correct. When we frame it as intelligence, we look for patterns to understand.

This shift matters.

It allows leaders to ask different questions. Not “Who caused this?” but “What is the system responding to?” Not “Why are people not following the process?” but “What does the process actually reward in practice?”

Blame freezes learning. Curiosity unlocks it.

When repetition becomes instruction

One of the most powerful forces in any system is repetition.

A behavior that is repeated becomes normalized. A decision that is reinforced becomes expected. A metric that is reviewed becomes meaningful, regardless of its original intent.

Over time, repetition becomes instruction.

This is how systems begin to “teach” their participants what matters.

No memo is required. No training is necessary. The lesson is embedded in experience.

This is why systems can drift even when their stated purpose remains unchanged. The formal language may stay the same, but the lived reality evolves.

Understanding this helps explain why adding more documentation often fails to change outcomes. The system is not listening to what is written. It is responding to what is repeated.

Self-reference and internal logic

To go deeper, it helps to borrow a concept from outside traditional management literature.

In Gödel, Escher, Bach, Douglas Hofstadter explores how systems can develop meaning and apparent intelligence through self-reference. He examines how formal systems, art, and music can loop back on themselves, creating structures that appear to observe and influence their own behavior.

While Hofstadter’s work spans mathematics, music, and philosophy, the underlying insight travels well into organizational life.

Systems that measure themselves change themselves.

In physics, Isaac Newton described a simple principle: a body in motion stays in motion unless acted upon by an external force. Systems behave the same way. Once a pattern gains momentum, it tends to persist.

When a system uses its own outputs as inputs for future decisions, it begins to develop an internal logic. The system starts responding not just to external goals, but to its own history.

Dashboards inform decisions. Decisions influence future data. That data then reinforces certain interpretations. Over time, the system begins to see the world through the lens it has created.
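The loop in which outputs become inputs can be illustrated with a small simulation in the style of a Pólya urn. It is a sketch under assumptions, not a model of any real dashboard: the two topics ("cost" and "quality") and the rule that attention follows the existing volume of data are both hypothetical.

```python
import random


def run_dashboard_loop(steps=200, seed=0):
    """Simulate a loop where past data decides where the next data point lands."""
    rng = random.Random(seed)
    counts = {"cost": 1, "quality": 1}  # two hypothetical dashboard topics
    for _ in range(steps):
        total = counts["cost"] + counts["quality"]
        # Attention follows history: the topic with more data gets looked at more.
        if rng.random() < counts["cost"] / total:
            topic = "cost"
        else:
            topic = "quality"
        # Looking at a topic produces one more data point about it.
        counts[topic] += 1
    return counts


if __name__ == "__main__":
    # Same rules, different random histories.
    for seed in range(3):
        print(seed, run_dashboard_loop(seed=seed))
```

Every run follows identical rules, yet the final balance between the two topics depends on which one happened to get attention early. That is one way to read the claim that the system responds to its own history, and why the same framework can settle into different patterns in different places.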

Metrics as mirrors, not levers

Metrics are often treated as levers. Pull the right one and behavior will follow.

In practice, metrics behave more like mirrors.

They reflect what the system currently values. They also shape what the system notices.

When metrics are introduced, they do more than measure performance. They signal importance. They direct attention. They create narratives about success and failure.

A well-chosen metric can support learning. A poorly contextualized metric can narrow perception.

The key insight is that metrics do not simply sit on top of systems. They become part of the system’s feedback loop.

Once this happens, the system starts optimizing for what it sees most clearly.

This is why mature systems periodically revisit not just their targets, but their lenses.

Governance as a living system

Governance is often discussed as structure. In reality, it is behavior shaped over time.

Committees develop norms. Decision thresholds become predictable. Certain types of proposals move more smoothly than others. Over time, governance bodies develop a shared sense of what is acceptable, realistic, or worth pursuing.

This is not rigidity. It is coherence.

Problems arise when governance is treated as static while the environment changes. The system continues to behave intelligently according to outdated signals.

The solution is not to dismantle governance, but to treat it as a living system that requires reflection.

Healthy governance asks not only “Are we following the rules?” but “What behaviors are our rules producing?”

The limits of internal perspective

One of the most subtle challenges in system stewardship is visibility.

Systems are very good at operating from the inside. They are less good at observing themselves.

This is not because people lack insight. It is because participation shapes perception.

When you are inside a system, many of its assumptions feel natural. Its rhythms feel normal. Its constraints feel inevitable.

This is why external perspectives, rotational roles, and reflective practices matter. They introduce fresh reference points. They disrupt self-reinforcement.

Hofstadter’s work highlights a similar idea, drawing on Gödel’s incompleteness theorems: a sufficiently rich formal system cannot prove every truth about itself from within its own rules. There are always truths that sit just outside formal articulation.

In organizational life, this translates into humility. An understanding that no matter how elegant the design, blind spots will exist.

Leadership as stewardship, not control

If systems naturally develop internal logic, what is the role of leadership?

Not control. Not constant intervention. Not endless redesign.

The role is stewardship.

Stewardship involves sensing patterns, not policing behavior. It involves tuning signals rather than issuing directives. It requires leaders to pay attention to how decisions echo through the system over time.

This is a quieter form of leadership. It values observation. It rewards patience. It accepts that influence often works indirectly.

Stewards ask questions like:

What behaviors are consistently rewarded here?

What signals travel fastest through the system?

Where do small changes create outsized effects?

What assumptions no longer match our environment?

These are not questions of blame. They are questions of alignment.

Designing for learning, not perfection

One of the most liberating insights for system designers is that perfection is neither possible nor desirable.

Systems that are too tightly specified struggle to adapt. Systems that allow room for interpretation can learn.

This does not mean abandoning rigor. It means pairing structure with reflection.

Learning-oriented systems build in pauses. They revisit assumptions. They create space for sense-making.

This is especially important in environments marked by complexity, uncertainty, and interdependence. In such contexts, responsiveness matters more than predictability.

The goal shifts from enforcing correctness to cultivating awareness.

The human role in thinking systems

It is tempting to talk about systems as though humans disappear. In reality, people are central.

Humans interpret signals. Humans make tradeoffs. Humans notice anomalies.

What changes is the nature of agency.

In thinking systems, agency is distributed. No single person controls outcomes, but everyone influences them.

This can feel uncomfortable in cultures that prize authority and clarity. But it is also empowering. It means leadership is not confined to titles. It lives in attention.

The most effective contributors in such systems are often those who notice patterns early and articulate them clearly.

The opportunity hidden in emergence

When systems start thinking for themselves, they reveal something valuable.

They show you what truly matters in practice.

They surface the difference between stated values and lived values. They expose misalignments gently, through repetition rather than confrontation.

If you are willing to listen, emergent behavior becomes a diagnostic tool.

It tells you where incentives conflict. Where structures oversimplify. Where assumptions no longer hold.

The opportunity is not to suppress emergence, but to learn from it.

Reflective questions for system stewards

To close, here are questions that support curiosity rather than correction:

What outcomes does our system reliably produce, regardless of intent?

Which behaviors feel effortless here, and which feel costly?

What does our system notice quickly, and what does it overlook?

How does our data shape our conversations?

Where might repetition be teaching something we did not explicitly choose?

These questions do not demand immediate answers. They invite ongoing attention.

Systems do not think the way humans do. They do not intend. They do not judge. They do not aspire.

But they do learn.

They learn from repetition. From feedback. From what is reinforced and what is ignored.

When we recognize this, our relationship with systems changes. We move from frustration to stewardship. From control to understanding. From blame to curiosity.

And in that shift, we gain something rare.

Hope this helps.

Nicole

Exactly that! I recommend reading my boundary declaration, which is linked in this article. It is published on Zenodo as a second-order epistemic boundary declaration. https://open.substack.com/pub/thecoherenceledger/p/we-are-still-looking-at-the-wrong?utm_source=share&utm_medium=android&r=5yewhm